Corvex Confidential

Verifiable security for your most sensitive AI workloads

Turning Your Biggest Challenge into a Competitive Advantage

Protect against IP Theft and Data Breaches

- Safely deploy proprietary AI models on third-party infrastructure without the provider “seeing” your model weights

- Run sensitive inference requests with confidence that no one else can access or train on your data

Accelerate Sales in Regulated Industries

- Enter healthcare, finance, and government with a platform built for security and compliance

- Verifiable, auditable proof of data protection; optional single-tenant VPCs

- HIPAA and SOC2-certified cloud

Confidential Computing: Verifiable Security for Mission-Critical AI

Your Model Weights. Protected by Hardware, not Promises.

Corvex Secure Model Weights delivers hardware-enforced, cryptographically verifiable protection for AI inference on third-party infrastructure, so you never have to trust the operator. The math and the hardware speak for themselves.

Owner-Controlled Key Custody

Your encryption keys never leave your control. The host provisions compute; it never sees your weights.

No Trust Required

Trusted Execution Environments ensure your valuable IP is safe from security vulnerabilities in your AI cloud provider’s stack.

Regulated Markets, Unlocked

Enhance security for models fine-tuned on healthcare, financial, or defense data. Deploy on-premise or off-premise.

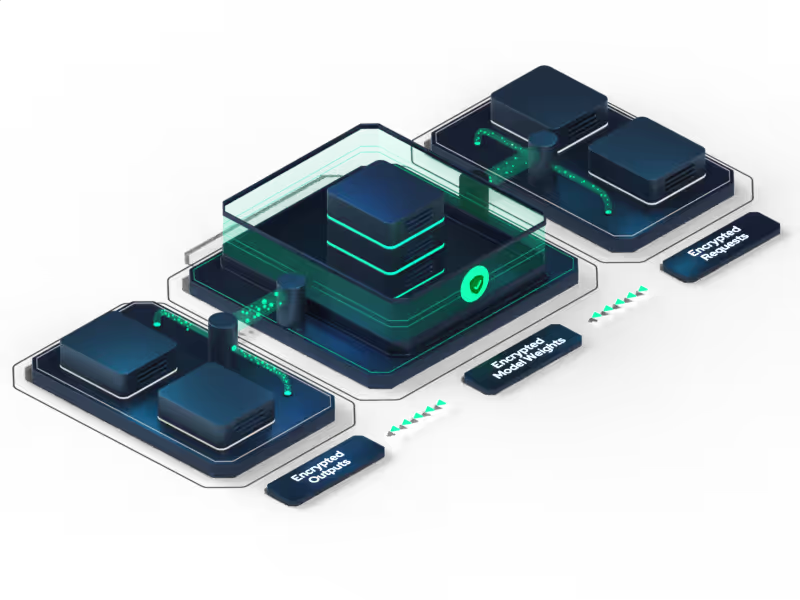

How Corvex Secure Model Weights Works

From encryption to inference — your model weights never leave hardware-enforced protection.

Model Owner Encrypts Weights & Sets Policy

Before any deployment, you encrypt your model weights using ML-KEM post-quantum key encapsulation, protection designed to be secure against both today's threats and tomorrow's. You also define your own attestation policy, specifying exactly which hardware configurations and software stacks are permitted to receive a decryption key. The key never leaves your custody.
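As a rough illustration of what an owner-defined attestation policy could look like, the sketch below models a policy as an allowlist of hardware and software measurements. The class names, field names, and measurement strings are hypothetical and are not taken from the Corvex product; the sketch only shows the allowlist idea.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class AttestationPolicy:
    """Hypothetical owner-defined policy: which environments may receive a key."""
    allowed_cpu_measurements: FrozenSet[str]
    allowed_gpu_firmware: FrozenSet[str]
    require_gpu_confidential_mode: bool = True


@dataclass(frozen=True)
class NodeEvidence:
    """Hypothetical summary of what a node reports about itself."""
    cpu_measurement: str
    gpu_firmware: str
    gpu_confidential_mode: bool


def is_permitted(policy: AttestationPolicy, evidence: NodeEvidence) -> bool:
    """Return True only if every reported value is on the owner's allowlist."""
    if evidence.cpu_measurement not in policy.allowed_cpu_measurements:
        return False
    if evidence.gpu_firmware not in policy.allowed_gpu_firmware:
        return False
    if policy.require_gpu_confidential_mode and not evidence.gpu_confidential_mode:
        return False
    return True


# Placeholder values for illustration only.
policy = AttestationPolicy(
    allowed_cpu_measurements=frozenset({"example-measurement-a"}),
    allowed_gpu_firmware=frozenset({"example-firmware-1"}),
)
approved = NodeEvidence("example-measurement-a", "example-firmware-1", True)
unknown_stack = NodeEvidence("example-measurement-x", "example-firmware-1", True)

print(is_permitted(policy, approved))       # True
print(is_permitted(policy, unknown_stack))  # False
```

Anything not explicitly listed is rejected, so an unrecognized software stack is denied by default rather than allowed by omission.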

Infrastructure is Cryptographically Verified

Before a key is released, the infrastructure must prove itself. Intel TDX produces a hardware attestation report for each node. NVIDIA GPU Confidential Computing produces a separate attestation of GPU firmware and memory state. A compromised or misconfigured host fails verification and never receives keys.
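The gating logic described here can be sketched as a fail-closed check: the key is returned only when both the CPU and the GPU attestation pass. The function name, the report dictionaries, and the verifier callables below are assumptions made for illustration; real verification of Intel TDX or NVIDIA attestation reports involves vendor tooling that is not shown.

```python
from typing import Callable, Mapping, Optional

# A verifier takes a parsed attestation report and says whether it is acceptable.
Verifier = Callable[[Mapping[str, str]], bool]


def release_key(
    key: bytes,
    cpu_report: Mapping[str, str],
    gpu_report: Mapping[str, str],
    verify_cpu: Verifier,
    verify_gpu: Verifier,
) -> Optional[bytes]:
    """Return the key only if BOTH attestations verify; otherwise return None."""
    checks = ((verify_cpu, cpu_report), (verify_gpu, gpu_report))
    for verify, report in checks:
        try:
            if not verify(report):
                return None  # failed verification: no key
        except Exception:
            return None  # a verifier error is treated as a failure (fail closed)
    return key


# Hypothetical verifiers that only inspect a placeholder "status" field.
def status_is_verified(report: Mapping[str, str]) -> bool:
    return report.get("status") == "verified"


secret = b"example-key-material"
good = {"status": "verified"}
bad = {"status": "misconfigured"}

print(release_key(secret, good, good, status_is_verified, status_is_verified) is not None)  # True
print(release_key(secret, good, bad, status_is_verified, status_is_verified) is None)       # True
```

Treating verifier errors the same as a failed check means a misbehaving host cannot obtain a key by causing the check to crash.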

Key Delivered Directly Into GPU Memory

Once attestation passes your policy, the Corvex key broker releases your key over a post-quantum-encrypted network path and delivers it directly into the hardware-protected GPU memory region. The key is never present in system RAM, never accessible to the host kernel or hypervisor, and never seen by the infrastructure operator.

Weights Decrypt Inside the Hardware Boundary

Model weights are decrypted exclusively inside the GPU's Compute Protected Region. At no point do they exist in cleartext outside hardware-protected memory. On Blackwell multi-GPU clusters, NVLink traffic is encrypted inline — the protection holds at any scale.

Inference Runs at Full Scale

Your model serves production traffic on GPU clusters — with near-native performance at 70B+ scale. The infrastructure operator manages compute, uptime, and performance. They never possess your keys, never see your weights, and cannot access them even under legal compulsion.

Confidential Computing In Action Today

Businesses rely on confidential computing from Corvex to protect proprietary information and intellectual property.

Healthcare & Biotech

Train and run inference on HIPAA-restricted AI models without on-premise clusters; accelerate IT security approvals.

Model Builders / SaaS / ISVs

Ship AI models as encrypted artifacts; authorized customers run them; no one can copy weights.

Finance

Collaborate on fraud models across institutions with keys held by each party; zero data residency conflicts.

Government

Protect sensitive data with nation-state-level security; accelerate innovation and data collaboration.

How Confidential Computing from Corvex Works

Confidential computing protects your AI workloads using hardware-based security to keep sensitive information private. We leverage Trusted Execution Environments (TEEs) — secure, isolated areas of a GPU — to ensure your code and data are protected and verifiable during execution.

Learn More

Unlocking Healthcare AI: Solve IT Security with Architecture

Unlock the full potential of AI in healthcare by solving the challenge of using PHI securely via system architecture.

Confidential Computing has Become the Backbone of Secure AI

The concept of confidential computing is becoming increasingly important. What does that mean, and why does it matter?

Frequently Asked Questions

1. Does encryption slow my model?

Yes, though GPU AES engines keep throughput within approximately 5-8% of plaintext. Individual results may vary.
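To put that range in concrete terms, here is a small worked calculation. It assumes the figure means throughput is 5-8% lower than an unencrypted baseline, and the baseline of 1,000 tokens per second is a made-up number used only for the arithmetic.

```python
def throughput_with_overhead(plaintext_tps: float, overhead_pct: float) -> float:
    """Throughput after subtracting a percentage overhead from the baseline."""
    return plaintext_tps * (100 - overhead_pct) / 100


baseline_tps = 1000.0  # hypothetical unencrypted throughput, tokens per second

best_case = throughput_with_overhead(baseline_tps, 5)   # 5% overhead
worst_case = throughput_with_overhead(baseline_tps, 8)  # 8% overhead

print(f"Estimated range: {worst_case:.0f} to {best_case:.0f} tokens/s")
```

Under those assumptions, the encrypted deployment would land between roughly 920 and 950 tokens per second.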

2. What GPUs support TEEs?

All Corvex nodes use NVIDIA H200 and B200 GPUs with built-in confidential computing.

3. How do I verify isolation?

Download the attestation quote via API before deploying workloads. Share it with auditors as evidence that the environment has not been tampered with.

4. Is root blocked?

Yes. Corvex can help configure machines so admins have no access.

5. What is a Trusted Execution Environment (TEE)?

A TEE is a secure, isolated area within a processor (like a GPU or CPU). Code and data inside the TEE are invisible to the rest of the system, including the cloud provider. Corvex uses hardware-level TEEs on our GPUs to ensure your AI workload is completely private while it's running.

6. How does remote attestation provide trust?

Remote attestation is a cryptographic process that proves two things: 1) Your workload is running inside a genuine TEE on a secure Corvex machine, and 2) The environment has not been tampered with. This provides a verifiable, auditable receipt of security, forming the basis of a zero-trust architecture.
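The two checks can be sketched in a few lines. This is a simplified stand-in, not the real protocol: actual TEEs sign quotes with hardware-rooted asymmetric keys and vendor certificate chains, whereas this sketch swaps in an HMAC with a shared key so it runs with the Python standard library alone. All names and values are hypothetical.

```python
import hashlib
import hmac
import secrets


def sign_quote(device_key: bytes, measurement: str, nonce: bytes) -> bytes:
    """Stand-in for hardware signing a quote (real TEEs use asymmetric signatures)."""
    return hmac.new(device_key, nonce + measurement.encode(), hashlib.sha256).digest()


def verify_quote(
    device_key: bytes,
    measurement: str,
    nonce: bytes,
    signature: bytes,
    expected_measurement: str,
) -> bool:
    """Check 1: the quote is genuine. Check 2: the environment matches expectations."""
    recomputed = hmac.new(device_key, nonce + measurement.encode(), hashlib.sha256).digest()
    genuine = hmac.compare_digest(recomputed, signature)
    untampered = hmac.compare_digest(measurement.encode(), expected_measurement.encode())
    return genuine and untampered


device_key = secrets.token_bytes(32)  # placeholder for a hardware-held key
nonce = secrets.token_bytes(16)       # fresh per request, so old quotes cannot be replayed
quote_sig = sign_quote(device_key, "example-measurement-a", nonce)

print(verify_quote(device_key, "example-measurement-a", nonce, quote_sig, "example-measurement-a"))  # True
print(verify_quote(device_key, "example-measurement-a", nonce, quote_sig, "example-measurement-b"))  # False
```

The nonce ties each quote to a single verification request, which is one common way to keep a recorded quote from being reused later.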

7. Can Corvex employees access my data?

No. Your data is encrypted at rest, in transit, and while in use. The hardware-based isolation of the TEE makes it technically impossible for anyone outside the secure environment to access the data or the model weights being processed, and that includes our own administrators. This is verifiably enforced by the hardware.

8. Is this compliant with regulations like HIPAA?

Yes. Corvex Confidential provides the core technical controls required to help you build solutions that comply with HIPAA and other compliance mandates. By ensuring data is protected in use, it helps you meet your regulatory obligations for processing sensitive information like Protected Health Information (PHI).

9. Can I use Corvex Confidential in my own cloud or on premises on private servers?

Yes. Corvex Confidential is available as downloadable software that you can install in your own cloud or on premises.

Start Securing Your AI Workloads Today

Protect your generative AI models and sensitive workloads with Corvex Confidential's Trusted Execution Environments
